Model-Based Systems Engineering (MBSE) relies heavily on SysML to define complex system architectures. As systems grow in complexity, the models used to describe them become increasingly intricate. Traditional validation methods, which depend primarily on human review and static rule checks, often struggle to keep pace with the dynamic nature of modern engineering projects. This creates a bottleneck where model fidelity lags behind design intent.

Artificial Intelligence (AI) offers a pathway to address these challenges. By integrating AI-assisted validation into the SysML workflow, teams can automate the detection of inconsistencies, ensure requirement traceability, and verify parametric constraints with greater accuracy. This shift does not replace human engineers but augments their capabilities, allowing them to focus on high-level architectural decisions rather than repetitive error checking. The following guide explores the practical integration of these technologies into existing engineering processes.

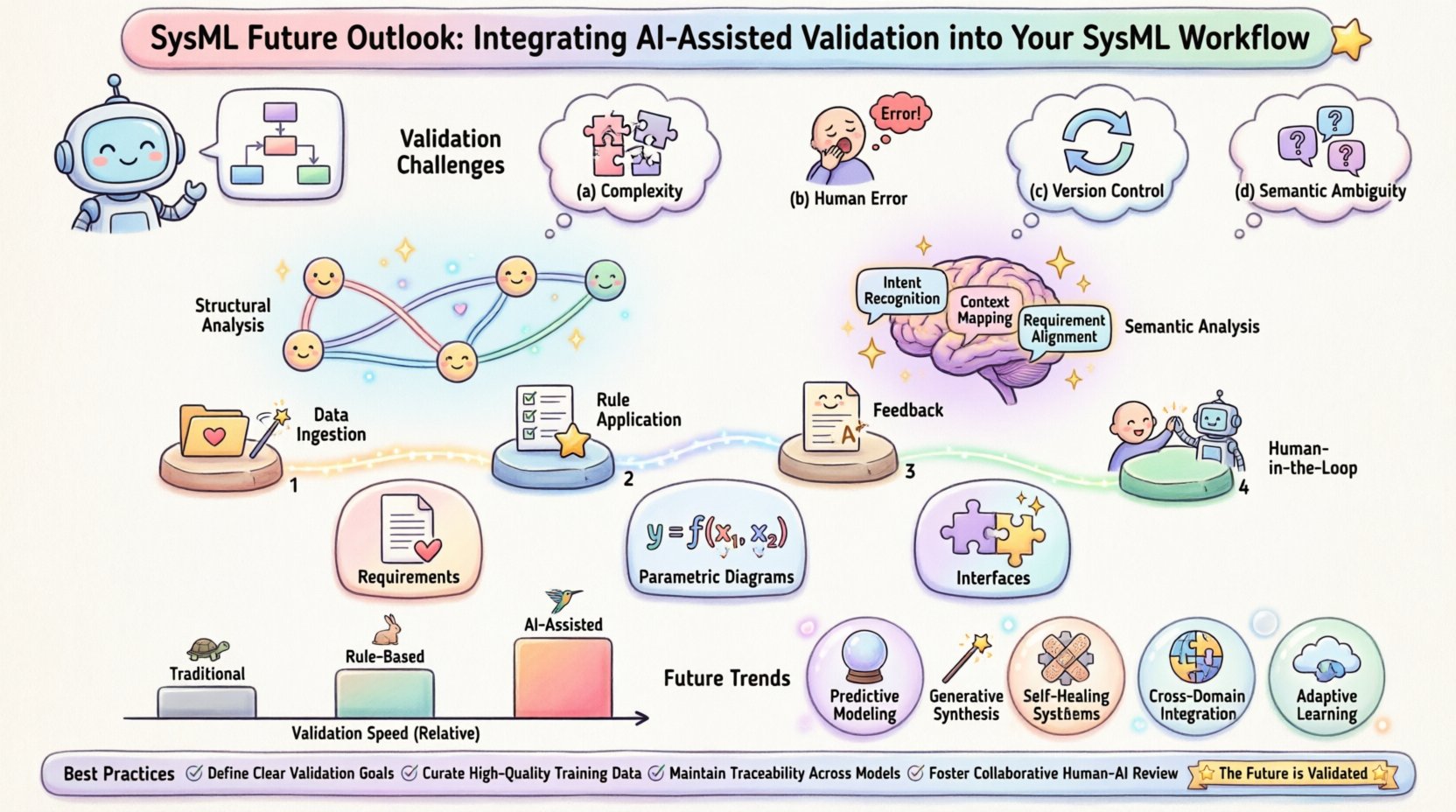

The Validation Challenge in Modern MBSE 🛠️

SysML models serve as the single source of truth for system design. However, maintaining the integrity of these models across a large organization is difficult. Several factors contribute to the validation gap:

- Scale and Complexity: Large systems involve thousands of blocks, relationships, and requirements. Manual verification of every link is time-prohibitive.

- Human Error: Engineers may inadvertently create circular references, miss traceability links, or define conflicting constraints during the modeling process.

- Version Control: As models evolve, ensuring that changes in one part of the system do not break assumptions in another is a significant logistical task.

- Semantic Ambiguity: Textual requirements often contain natural language nuances that are difficult to map to formal model structures without assistance.

Without automated support, these issues accumulate. A small inconsistency in a block definition can lead to a major failure during system integration. The goal of AI integration is to create a continuous feedback loop that catches these issues early in the development lifecycle.

Understanding AI-Assisted Validation 🧠

AI-assisted validation involves using machine learning and natural language processing techniques to analyze SysML models. The technology operates on two main levels: structural analysis and semantic analysis.

Structural Analysis

SysML models are essentially graphs consisting of nodes (blocks, requirements, interfaces) and edges (relationships). Structural analysis examines the topology of that graph, using classical graph algorithms for well-defined checks and, where learned patterns help, graph neural networks. It can identify:

- Circular dependencies that prevent proper simulation.

- Isolated components that are not linked to the main system.

- Missing relationships between parent and child blocks.

- Violations of defined modeling standards or templates.
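The first two checks in the list above can be sketched with plain graph traversal, with no learned model involved. The sketch below assumes the model has already been reduced to a list of block names and a list of directed `(source, target)` dependency pairs; the function names `find_cycle` and `find_isolated` and the sample block names are hypothetical.

```python
from collections import defaultdict


def find_cycle(edges):
    """Return one dependency cycle as a list of node names, or None if acyclic."""
    graph = defaultdict(list)
    for src, dst in edges:
        graph[src].append(dst)

    WHITE, GRAY, BLACK = 0, 1, 2  # unvisited, on the current path, finished
    color = defaultdict(int)      # every node starts as WHITE (0)
    path = []

    def visit(node):
        color[node] = GRAY
        path.append(node)
        for nxt in graph[node]:
            if color[nxt] == GRAY:
                # Back edge: nxt is already on the current path, so we found a cycle.
                return path[path.index(nxt):] + [nxt]
            if color[nxt] == WHITE:
                found = visit(nxt)
                if found:
                    return found
        path.pop()
        color[node] = BLACK
        return None

    for node in list(graph):
        if color[node] == WHITE:
            found = visit(node)
            if found:
                return found
    return None


def find_isolated(nodes, edges):
    """Return nodes that take part in no relationship at all."""
    connected = {name for edge in edges for name in edge}
    return sorted(set(nodes) - connected)


# Hypothetical sample model.
blocks = ["Vehicle", "Powertrain", "Battery", "Logger"]
dependencies = [
    ("Vehicle", "Powertrain"),
    ("Powertrain", "Battery"),
    ("Battery", "Vehicle"),
]
cycle = find_cycle(dependencies)
isolated = find_isolated(blocks, dependencies)
```

Deterministic checks like these are cheap to run on every save; a learned model is better reserved for patterns that cannot be stated as an explicit rule.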

Semantic Analysis

Requirements are often written in natural language. Semantic AI uses Natural Language Processing (NLP) to understand the meaning of text. This allows the system to:

- Match textual requirements to specific model elements.

- Detect contradictory requirements (e.g., one requirement demands high speed while another caps power consumption, with no trade-off justification linking the two).

- Identify vague or ambiguous language that needs clarification before detailed design begins.
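As a rough illustration of the last point, the sketch below flags vague wording with a fixed keyword list. This is a deliberately simple stand-in for an NLP model, not a description of how any particular tool works; the term list and the name `flag_vague_terms` are assumptions chosen for the example.

```python
import re

# Hypothetical list of words that usually signal an unverifiable requirement.
VAGUE_TERMS = {
    "adequate", "as appropriate", "easy", "fast",
    "quickly", "robust", "sufficient", "user-friendly",
}


def flag_vague_terms(requirement_text):
    """Return the vague terms found in the text, in alphabetical order."""
    lowered = requirement_text.lower()
    hits = []
    for term in sorted(VAGUE_TERMS):
        # Word boundaries stop "fast" from matching inside "fasten" or "faster".
        if re.search(r"\b" + re.escape(term) + r"\b", lowered):
            hits.append(term)
    return hits


vague = flag_vague_terms("The system shall respond quickly and be user-friendly.")
precise = flag_vague_terms("The pump shall deliver 5 L/min at 20 degrees Celsius.")
```

A trained language model can catch ambiguity that no keyword list anticipates, but a list like this is transparent and easy to audit.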

Combining these approaches creates a robust validation engine that looks at both the shape and the meaning of the system design.

Integrating AI into Your SysML Workflow 🔗

Implementing AI validation requires a shift in how engineering teams manage their data. It is not merely a software addition but a process change. The integration can be broken down into four key phases.

1. Data Ingestion and Normalization

Before AI can process a model, the data must be accessible in a standardized format. SysML models are often stored in XMI (XML Metadata Interchange) files. The integration process must ensure that:

- Model files are extracted and parsed correctly.

- Metadata is preserved alongside the model structure.

- Natural language requirements are exported in a format readable by NLP models.
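A minimal sketch of the parsing step, using Python's standard `xml.etree.ElementTree`. The embedded sample document, its namespace URIs, and the `packagedElement` layout are assumptions for illustration; real exports differ between tools and XMI versions, so the attribute and tag names would need to be adapted.

```python
import xml.etree.ElementTree as ET

# Assumed namespace URI for this sample; check the header of your own export.
XMI_NS = "http://www.omg.org/spec/XMI/20131001"

# Hypothetical, heavily simplified export containing two blocks.
SAMPLE_XMI = f"""
<xmi:XMI xmlns:xmi="{XMI_NS}" xmlns:uml="http://www.omg.org/spec/UML/20131001">
  <uml:Model xmi:id="m1" name="Demo">
    <packagedElement xmi:type="uml:Class" xmi:id="b1" name="Battery"/>
    <packagedElement xmi:type="uml:Class" xmi:id="b2" name="Motor"/>
  </uml:Model>
</xmi:XMI>
""".strip()


def extract_elements(xmi_text):
    """Flatten packaged elements into dictionaries that downstream checks can consume."""
    root = ET.fromstring(xmi_text)
    # ElementTree expands prefixed attributes to the "{namespace}local" form.
    id_attr = f"{{{XMI_NS}}}id"
    type_attr = f"{{{XMI_NS}}}type"
    elements = []
    for node in root.iter("packagedElement"):
        elements.append({
            "id": node.get(id_attr),
            "type": node.get(type_attr),
            "name": node.get("name"),
        })
    return elements


elements = extract_elements(SAMPLE_XMI)
```

Normalizing to plain dictionaries keeps the later checks independent of the export format.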

2. Automated Rule Application

This phase involves running the AI algorithms against the normalized data. Instead of waiting for a manual review, the system runs checks continuously. Key checks include:

- Syntactic Validity: Does the model conform to the SysML grammar?

- Traceability: Are all requirements linked to a design element?

- Constraint Satisfaction: Do parametric equations resolve to valid values?
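The traceability check is the easiest of the three to sketch. The example assumes the normalized data provides a list of requirement IDs and a list of `(design_element_id, requirement_id)` pairs representing satisfy relationships; the function name and the sample IDs are hypothetical.

```python
def untraced_requirements(requirement_ids, satisfy_links):
    """Return requirement IDs that no design element claims to satisfy."""
    traced = {req_id for _element_id, req_id in satisfy_links}
    return sorted(set(requirement_ids) - traced)


# Hypothetical sample data.
requirements = ["REQ-1", "REQ-2", "REQ-3"]
links = [("Battery", "REQ-1"), ("Motor", "REQ-3")]
missing = untraced_requirements(requirements, links)
```

Because this check is a set difference, it can run on every commit at negligible cost.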

3. Feedback and Reporting

The AI engine must communicate findings back to the engineer. This is not just a pass/fail metric. Reports should highlight:

- The specific element causing the error.

- The nature of the violation.

- Suggested remediation steps based on similar resolved issues.
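One possible shape for such a report is a list of structured findings serialized to JSON. The field names below mirror the three items in the list above plus a severity level; the `Finding` class, its fields, and the sample values are illustrative assumptions rather than a standard schema.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class Finding:
    element_id: str   # the specific element causing the error
    violation: str    # the nature of the violation
    suggestion: str   # proposed remediation step
    severity: str = "warning"


def render_report(findings):
    """Serialize findings to JSON so editors and pipelines can consume them."""
    return json.dumps([asdict(finding) for finding in findings], indent=2)


report = render_report([
    Finding("REQ-2", "No satisfy link to any design element",
            "Link REQ-2 to the block that implements it", severity="error"),
])
```

Keeping the element ID in every finding lets the modeling tool jump straight to the offending element.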

4. Human-in-the-Loop Verification

AI is a tool, not a judge. Engineers must review AI-generated flags to confirm validity. False positives occur, and human judgment is required to interpret context. Recording each accept or reject decision also gives the AI the feedback it needs to improve over time.
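Review decisions are only useful as feedback if they are recorded. The sketch below assumes each reviewed flag is stored as `"confirmed"` or `"rejected"` against a finding ID, and derives the share of reviewed flags that were false positives; the labels and the function name are hypothetical.

```python
from collections import Counter


def false_positive_rate(review_decisions):
    """Return the share of reviewed flags that engineers rejected, or None if none were reviewed."""
    counts = Counter(review_decisions.values())
    reviewed = counts["confirmed"] + counts["rejected"]
    if reviewed == 0:
        return None
    return counts["rejected"] / reviewed


# Hypothetical review log: finding ID -> engineer decision.
decisions = {"F-1": "confirmed", "F-2": "rejected", "F-3": "confirmed", "F-4": "rejected"}
rate = false_positive_rate(decisions)
```

Tracking this number per check type shows which checks need tuning and which can be trusted with less scrutiny.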

Key Areas for AI Intervention 🎯

Different parts of a SysML model benefit from different AI techniques. Understanding where to apply technology ensures the best return on investment.

Requirement Management

Requirements are the foundation of MBSE. AI can analyze the requirement set to ensure:

- Uniqueness: No two requirements state the same thing.

- Completeness: All necessary system functions are described.

- Consistency: No requirements contradict one another.

- Testability: Requirements are phrased in a way that allows for verification.
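The uniqueness check can be approximated without a language model by comparing word overlap between requirements. The sketch below uses Jaccard similarity on lower-cased tokens; the 0.8 threshold, the function names, and the sample requirements are assumptions, and a production system would more likely use sentence embeddings.

```python
import re
from itertools import combinations


def tokens(text):
    """Lower-case the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def near_duplicates(requirements, threshold=0.8):
    """Return (id_a, id_b, score) for requirement pairs whose word overlap meets the threshold."""
    pairs = []
    for (id_a, text_a), (id_b, text_b) in combinations(sorted(requirements.items()), 2):
        a, b = tokens(text_a), tokens(text_b)
        union = a | b
        # Jaccard similarity: shared words divided by all distinct words.
        score = len(a & b) / len(union) if union else 0.0
        if score >= threshold:
            pairs.append((id_a, id_b, round(score, 2)))
    return pairs


# Hypothetical requirement set.
sample = {
    "R1": "The pump shall deliver 5 litres per minute.",
    "R2": "Pump shall deliver 5 litres per minute",
    "R3": "The housing shall be waterproof to IP67.",
}
duplicates = near_duplicates(sample)
```

Word overlap misses paraphrases that share no vocabulary, which is where semantic models earn their place.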

Parametric Diagrams

Parametric diagrams define the physical and mathematical constraints of the system. AI can validate:

- Equation Solvability: Ensuring equations can be solved without over-constraining the system.

- Variable Units: Checking that bound inputs and outputs use compatible units (e.g., flagging a length in meters bound to a duration in seconds).

- Boundary Conditions: Verifying that the system behaves correctly at the edges of its operating envelope.
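The unit check can be sketched by mapping each unit to a vector of dimension exponents and comparing the vectors across a binding. The unit table below covers only a handful of units and uses (length, mass, time) exponents; the table, the function names, and the sample bindings are illustrative assumptions.

```python
# Dimension exponents as (length, mass, time). Hypothetical, partial table.
UNIT_DIMENSIONS = {
    "m": (1, 0, 0),
    "kg": (0, 1, 0),
    "s": (0, 0, 1),
    "m/s": (1, 0, -1),
    "N": (1, 1, -2),  # newton = kg * m / s^2
}


def units_compatible(source_unit, target_unit):
    """Two units are compatible when their dimension exponents are identical."""
    return UNIT_DIMENSIONS[source_unit] == UNIT_DIMENSIONS[target_unit]


def check_bindings(bindings):
    """Return (source, target) name pairs whose units have different dimensions.

    Each binding is (source_name, source_unit, target_name, target_unit).
    """
    return [
        (source, target)
        for source, source_unit, target, target_unit in bindings
        if not units_compatible(source_unit, target_unit)
    ]


# Hypothetical bindings from a parametric diagram.
mismatches = check_bindings([
    ("wheel.speed", "m/s", "controller.speedIn", "m/s"),
    ("arm.length", "m", "timer.period", "s"),
])
```

This catches dimension mismatches only; scale differences such as meters versus millimeters need a conversion factor alongside each unit.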

Interface Definitions

Interfaces define how components communicate. AI can check:

- Port Matching: Ensuring input ports match output ports in type and data flow.

- Signal Integrity: Analyzing signal definitions for completeness.

- Protocol Compliance: Checking if defined protocols align with industry standards.
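Port matching is largely a rule check and can be sketched as follows. The `Port` class, its fields, and the `"in"`/`"out"` direction labels are simplifications assumed for the example; SysML flow properties and conjugated ports carry more detail than this.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Port:
    owner: str       # block that owns the port
    name: str
    direction: str   # "in" or "out" (simplified)
    type_name: str   # name of the type flowing through the port


def check_connector(source, target):
    """Return a list of human-readable problems for one source-to-target connector."""
    problems = []
    if source.direction != "out":
        problems.append(f"{source.owner}.{source.name} is not an output port")
    if target.direction != "in":
        problems.append(f"{target.owner}.{target.name} is not an input port")
    if source.type_name != target.type_name:
        problems.append(
            f"type mismatch: {source.type_name} flows into {target.type_name}"
        )
    return problems


# Hypothetical connector between a sensor and a controller.
issues = check_connector(
    Port("Sensor", "reading", "out", "Temperature"),
    Port("Controller", "setpoint", "in", "Pressure"),
)
```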

Overcoming Implementation Hurdles ⚠️

Adopting AI in engineering workflows is not without challenges. Teams must navigate technical and cultural obstacles to succeed.

Data Quality and Privacy

AI models require high-quality training data. If historical models are riddled with errors, the AI will learn to accept those errors. Furthermore, engineering data is often sensitive. Teams must ensure that:

- Local processing is used for sensitive data to prevent leaks.

- Data is anonymized if cloud-based models are utilized.

- Data cleaning processes are established before ingestion.

Interpretability

Engineers need to trust the AI. If the AI flags a requirement as invalid, the engineer must be able to see why. Black-box models are difficult to adopt in safety-critical industries, so transparent models that explain the logic behind each flag are preferred.

Integration with Existing Tools

Most organizations have established workflows. The AI validation layer must integrate seamlessly with current systems. This means:

- Supporting standard file formats like XMI.

- Providing APIs for custom scripting.

- Operating within continuous integration pipelines.
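In a continuous integration pipeline, the validation layer usually needs to turn its findings into a pass or fail signal. The sketch below assumes findings arrive as a JSON array of objects with a `severity` field; that schema and the `gate` function are hypothetical, not part of any specific tool.

```python
import json


def gate(report_json, fail_on=("error",)):
    """Return (exit_code, blocking_findings) for a JSON report of findings."""
    findings = json.loads(report_json)
    blocking = [f for f in findings if f.get("severity") in fail_on]
    # Exit code 1 fails the pipeline step; 0 lets it pass.
    return (1 if blocking else 0), blocking


# Hypothetical report produced by an earlier validation step.
sample_report = json.dumps([
    {"element_id": "REQ-2", "severity": "error"},
    {"element_id": "b7", "severity": "warning"},
])
code, blocking = gate(sample_report)
```

A pipeline step would pass `code` to `sys.exit` so that blocking findings stop the merge while warnings are only reported.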

Future Trends in Model Verification 🔮

As technology advances, the capabilities of AI-assisted validation will expand. Looking ahead, several trends are emerging.

- Predictive Validation: Instead of checking the current state, AI will predict future failures based on design trends. It might flag a design choice that looks good now but will cause maintenance issues later.

- Generative Design: AI will not only check models but also suggest improvements. It could propose alternative block structures that satisfy requirements more efficiently.

- Self-Healing Models: In advanced scenarios, the system might automatically fix minor inconsistencies, such as adding missing traceability links, after human approval.

- Cross-Domain Analysis: AI will connect SysML models with other data sources, such as CAD files or simulation logs, to provide a holistic view of system health.

Comparison of Validation Methods

The table below compares traditional validation methods with AI-assisted approaches to highlight the differences in scope and efficiency.

| Feature | Traditional Manual Review | Rule-Based Automation | AI-Assisted Validation |
|---|---|---|---|
| Speed | Slow | Fast | Very Fast |
| Scope | Limited by human capacity | Fixed rules only | Comprehensive (Structure + Semantics) |
| False Positives | Low | High (rigid rules) | Medium (requires tuning) |
| Context Awareness | High | None | High (via NLP) |
| Adaptability | High | Low | Medium (learning models) |

Best Practices for Adoption 📋

To successfully integrate AI validation without disrupting operations, follow these recommendations.

- Start Small: Begin with a specific subsystem or a single diagram type. Prove the value before scaling to the entire enterprise.

- Define Metrics: Establish clear key performance indicators (KPIs) to measure success, such as reduction in defect leakage or time saved per review cycle.

- Maintain Human Oversight: Never fully automate critical safety checks. Always keep an engineer in the loop to validate AI findings.

- Document Rules: Keep a clear record of what the AI is checking and how it makes decisions. This is vital for compliance and auditing.

- Train the Team: Ensure engineers understand how to interpret AI reports. Training reduces friction and increases adoption rates.

Conclusion

The integration of AI-assisted validation into SysML workflows represents a significant step forward for systems engineering. It addresses the growing complexity of modern systems by providing tools that can analyze models faster and more comprehensively than human teams alone. By focusing on structural integrity and semantic consistency, organizations can reduce errors, improve traceability, and accelerate delivery.

This transition requires careful planning, investment in data quality, and a commitment to continuous improvement. However, the long-term benefits in system reliability and engineering efficiency make the effort worthwhile. As AI capabilities mature, they will become an indispensable part of the Model-Based Systems Engineering toolkit.

Engineers who embrace these tools will find themselves better equipped to handle the demands of next-generation system development. The future of MBSE is not just about creating models; it is about ensuring those models are correct, consistent, and ready for implementation.