Building a technology architecture that supports growth requires more than assembling components. It demands a strategic approach that anticipates demand, ensures resilience, and maintains performance under pressure. When organizations aim for scalability, they are not simply looking for speed; they are looking for endurance and adaptability. This guide explores the principles, frameworks, and structural elements needed to plan technology architecture for scalable infrastructure. It also shows how an established methodology such as TOGAF can guide these decisions without depending on vendor-specific solutions.

Scalability is the ability of a system to handle increased load by adding resources. However, true architectural scalability involves designing systems where growth does not compromise stability. This requires a deep understanding of non-functional requirements, data flow, and the interplay between hardware and software layers. By focusing on foundational principles, teams can create environments that expand organically alongside business needs.

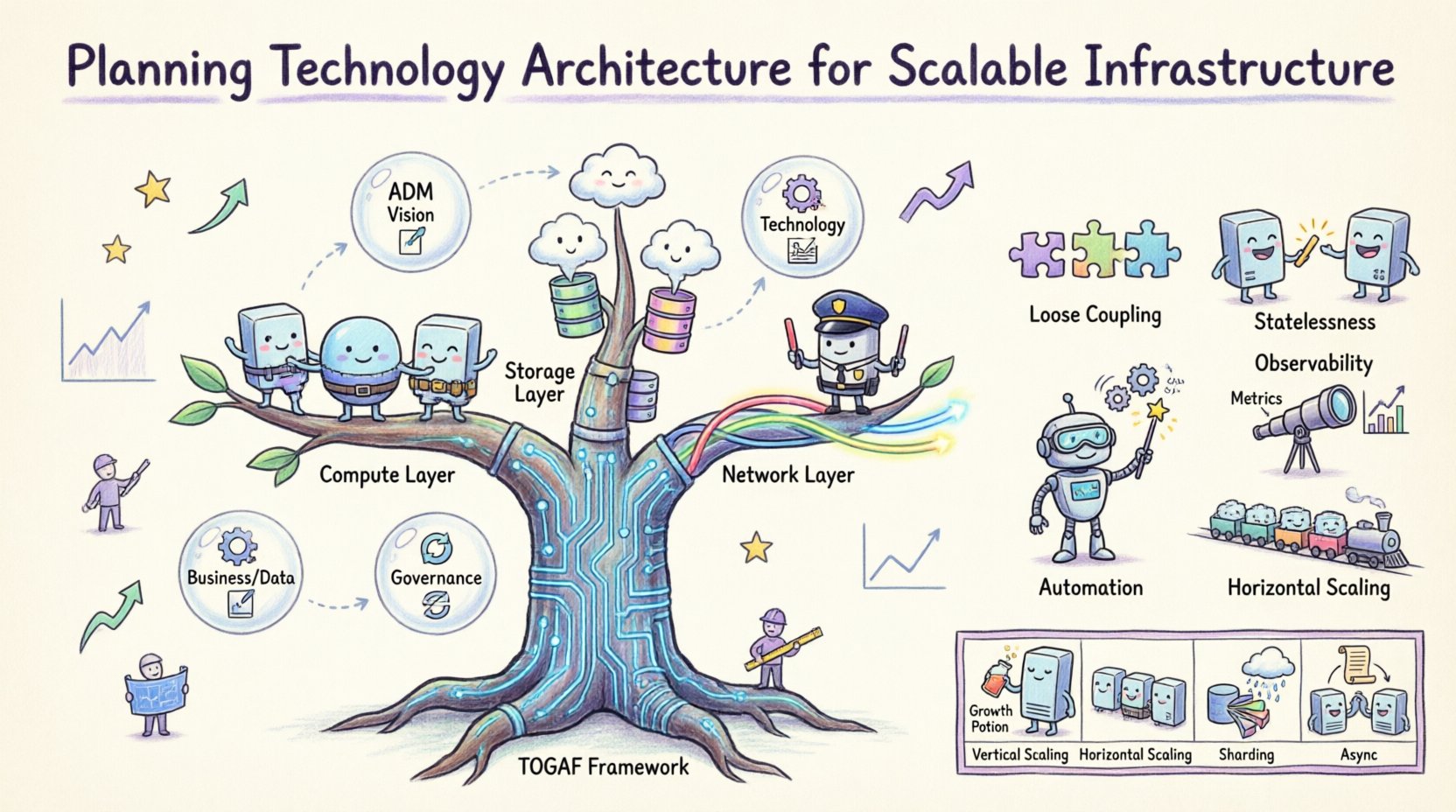

Understanding TOGAF in the Context of Infrastructure 🧭

The Open Group Architecture Framework (TOGAF) provides a structured approach to designing, planning, implementing, and governing an enterprise architecture. While often associated with high-level business strategy, its Architecture Development Method (ADM) is also highly effective for infrastructure planning. TOGAF helps ensure that technical decisions align with business goals, preventing the creation of siloed systems that cannot communicate or scale efficiently.

When applying TOGAF to technology architecture, the focus shifts to the Technology Architecture phase. This phase defines the hardware, software, and network capabilities required to support the prioritized business processes. It bridges the gap between the logical business requirements and the physical implementation.

- Alignment: Ensures infrastructure supports current and future business objectives.

- Standardization: Reduces complexity by enforcing common technology standards.

- Integration: Facilitates smooth data exchange across different system layers.

- Manageability: Simplifies operations and maintenance over the system lifecycle.

Using a framework like this prevents ad-hoc scaling, where new resources are added without a coherent plan. Instead, it promotes a holistic view where scaling is a planned evolution rather than a reactive fix.

The Architecture Development Method (ADM) Cycle ⏳

The ADM cycle is the core of the TOGAF methodology. It is iterative, allowing architects to refine their designs as requirements evolve. For infrastructure planning, specific phases offer critical insights.

Phase A: Architecture Vision 🎯

This phase sets the stage by defining the scope and constraints. In infrastructure planning, this involves understanding the projected growth rates, regulatory requirements, and performance benchmarks. Stakeholders agree on the definition of scalability within the organization. Is the goal to handle ten times the current load, or to support new geographic regions? These questions shape the technical roadmap.

Phases B & C: Business & Information Systems Architecture 📊

Before designing servers or networks, one must understand the data and applications that will run on them. Phase B identifies the business processes. Phase C defines the data architecture and application architecture. Scalability depends heavily on how data is structured and accessed. If the data model is rigid, the infrastructure cannot scale effectively. This phase ensures that the logical requirements for data volume and transaction speed are documented early.

Phase D: Technology Architecture 🖥️

This is the critical phase for infrastructure planning. It translates the logical requirements from Phase C into physical specifications. It covers platform selection, network topology, and security architecture. The goal is to create a blueprint that supports the required throughput and availability. Key considerations include:

- Compute Resources: Determining the balance between processing power and memory.

- Storage Strategies: Deciding on local versus distributed storage solutions.

- Network Bandwidth: Ensuring sufficient capacity for data transfer between nodes.

- Resilience: Designing for redundancy to prevent single points of failure.
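The compute and resilience considerations above can be turned into a rough sizing calculation. The following is a minimal sketch, not a TOGAF artifact: the function name `nodes_required`, the 70% utilization ceiling, and the single spare node are illustrative assumptions, and real sizing should be based on measured per-node throughput.

```python
import math


def nodes_required(peak_rps: float, rps_per_node: float,
                   target_utilization: float = 0.7,
                   spare_nodes: int = 1) -> int:
    """Estimate nodes needed for a peak load, plus spares for redundancy.

    Each node is planned to run at or below `target_utilization` of its
    measured capacity, and `spare_nodes` extra nodes are added so that a
    single failure does not push the rest over that ceiling (N+1 by default).
    """
    if peak_rps < 0 or rps_per_node <= 0:
        raise ValueError("load must be >= 0 and per-node capacity > 0")
    if not 0 < target_utilization <= 1:
        raise ValueError("target_utilization must be in (0, 1]")
    usable_rps = rps_per_node * target_utilization
    base_nodes = max(1, math.ceil(peak_rps / usable_rps))
    return base_nodes + spare_nodes


# Hypothetical figures: 12,000 requests/s at peak, 1,500 requests/s per node.
print(nodes_required(peak_rps=12_000, rps_per_node=1_500))
```

With these assumed numbers, each node is planned for about 1,050 requests per second, so twelve nodes carry the peak and a thirteenth provides redundancy.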

Phases E to H: Opportunities, Planning, Governance, and Change 🔄

These phases manage the implementation and ongoing evolution. Scalability is not a one-time event; it is a continuous process. Governance ensures that changes to the infrastructure do not degrade performance. Change management allows the architecture to adapt to new technologies or shifting market demands without requiring a complete rebuild.

Key Architectural Principles for Growth 📈

To achieve scalability, specific principles must guide every decision. These principles act as guardrails, ensuring that the architecture remains robust as it expands.

- Loose Coupling: Components should operate independently. If one service fails or requires scaling, it should not impact others. This allows for targeted resource allocation.

- Statelessness: Application servers should not store user session data locally; session state belongs in a shared store that every server can reach. This enables any server to handle any request, simplifying load distribution.

- Automation: Manual scaling is slow and error-prone. Processes for provisioning and configuring resources should be automated.

- Observability: The system must provide clear visibility into its own health. Metrics, logs, and traces are essential for identifying bottlenecks before they cause outages.

- Horizontal Scaling: Adding more nodes to a cluster is often more effective and cost-efficient than increasing the power of a single node.

Adhering to these principles reduces technical debt and creates a foundation that can support rapid expansion.
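To make the statelessness principle concrete, here is a small sketch in which three application servers share one session store, so consecutive requests from the same user can land on different servers. The classes `SharedSessionStore` and `AppServer` are hypothetical, and the in-memory dictionary merely stands in for a networked cache or database.

```python
from itertools import cycle


class SharedSessionStore:
    """Stand-in for an external session store reachable by every server."""

    def __init__(self) -> None:
        self._data: dict[str, dict] = {}

    def load(self, session_id: str) -> dict:
        return dict(self._data.get(session_id, {}))

    def save(self, session_id: str, state: dict) -> None:
        self._data[session_id] = dict(state)


class AppServer:
    """Keeps no per-user state of its own; everything lives in the store."""

    def __init__(self, name: str, store: SharedSessionStore) -> None:
        self.name = name
        self._store = store

    def handle(self, session_id: str) -> tuple[str, int]:
        state = self._store.load(session_id)
        state["requests"] = state.get("requests", 0) + 1
        self._store.save(session_id, state)
        return self.name, state["requests"]


store = SharedSessionStore()
pool = cycle([AppServer(f"app-{i}", store) for i in (1, 2, 3)])

# Round-robin four requests from the same user across the pool.
results = [next(pool).handle("user-42") for _ in range(4)]
print(results)
```

Because no server holds the counter locally, the request count keeps increasing even though each request is served by a different node.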

Infrastructure Components Breakdown 💻

A scalable infrastructure is composed of several interdependent layers. Each layer must be designed to handle increased load without becoming a bottleneck.

Compute Layer

The compute layer is where the business logic executes. For scalability, the focus is on elasticity. Resources should be provisioned dynamically based on demand. This involves grouping computing resources into pools that can be expanded or contracted automatically. Key considerations include:

- Processor Architecture: Selecting instruction sets that optimize for the specific workload.

- Memory Management: Ensuring sufficient RAM to handle concurrent processes without swapping.

- Containerization: Using lightweight packaging to isolate applications and manage resource limits efficiently.
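Elastic provisioning usually comes down to a rule that converts an observed metric into a desired pool size. The sketch below assumes a simple proportional rule on average utilization; the function name, the percentage inputs, and the minimum and maximum bounds are illustrative choices rather than the behavior of any particular platform.

```python
import math


def desired_replicas(current: int, observed_pct: int, target_pct: int,
                     min_replicas: int = 1, max_replicas: int = 20) -> int:
    """Scale the pool so average utilization moves toward the target.

    Utilization is given in whole percent to keep the arithmetic exact.
    The result is clamped so the pool never shrinks below `min_replicas`
    or grows past `max_replicas`.
    """
    if current < 1 or target_pct <= 0:
        raise ValueError("current must be >= 1 and target_pct > 0")
    proposed = math.ceil(current * observed_pct / target_pct)
    return max(min_replicas, min(max_replicas, proposed))


print(desired_replicas(current=4, observed_pct=90, target_pct=60))  # scale out
print(desired_replicas(current=4, observed_pct=30, target_pct=60))  # scale in
```

The upper bound acts as a cost guardrail, and the lower bound keeps a baseline of capacity available during quiet periods.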

Storage Layer

Data growth is inevitable. The storage architecture must accommodate increasing volumes while maintaining low latency. Distributed storage systems are often preferred over centralized arrays for large-scale environments. They offer better fault tolerance and the ability to add capacity incrementally.

- Data Partitioning: Splitting data across multiple nodes to distribute the read and write load.

- Replication: Creating copies of data across different locations to ensure availability and speed up access.

- Caching: Storing frequently accessed data in fast memory layers to reduce database load.
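As an illustration of the caching item above, the following sketch implements a small least-recently-used cache that falls back to a loader function on a miss. The class name `LRUCache` and the `slow_lookup` loader are hypothetical; a production system would more likely use a dedicated cache service.

```python
from collections import OrderedDict
from typing import Callable


class LRUCache:
    """Bounded cache that evicts the least recently used entry when full."""

    def __init__(self, capacity: int) -> None:
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        self.capacity = capacity
        self.hits = 0
        self.misses = 0
        self._items: OrderedDict = OrderedDict()

    def get(self, key: str, loader: Callable[[str], object]) -> object:
        if key in self._items:
            self._items.move_to_end(key)      # mark as most recently used
            self.hits += 1
            return self._items[key]
        self.misses += 1
        value = loader(key)                   # fall through to the slow store
        self._items[key] = value
        if len(self._items) > self.capacity:
            self._items.popitem(last=False)   # drop the oldest entry
        return value


loaded: list[str] = []


def slow_lookup(key: str) -> str:
    """Stand-in for a database query; records every call it receives."""
    loaded.append(key)
    return f"value-for-{key}"


cache = LRUCache(capacity=2)
for key in ["a", "b", "a", "c", "b"]:
    cache.get(key, slow_lookup)
print(cache.hits, cache.misses, loaded)
```

In this run the second read of `a` is served from memory, while `b` has to be reloaded because inserting `c` evicted it.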

Network Layer

The network acts as the connective tissue. If the network cannot keep up, the entire system slows down. Scalable network design focuses on bandwidth, latency, and routing efficiency.

- Load Balancing: Distributing incoming traffic across multiple servers to prevent overload.

- Content Delivery: Placing content closer to the user to reduce latency.

- Bandwidth Management: Prioritizing critical traffic to ensure essential services remain responsive.
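Load balancing can follow several strategies. One common option is to send each new request to the server with the fewest active connections, sketched below. The class and server names are hypothetical, and health checks and weighting are deliberately omitted.

```python
class LeastConnectionsBalancer:
    """Routes each request to the server with the fewest active connections."""

    def __init__(self, servers: list[str]) -> None:
        if not servers:
            raise ValueError("at least one server is required")
        self._active = {server: 0 for server in servers}

    def acquire(self) -> str:
        # Ties go to the server listed first, since dicts keep insertion order.
        server = min(self._active, key=self._active.get)
        self._active[server] += 1
        return server

    def release(self, server: str) -> None:
        if self._active[server] > 0:
            self._active[server] -= 1


balancer = LeastConnectionsBalancer(["web-1", "web-2", "web-3"])
first_three = [balancer.acquire() for _ in range(3)]
balancer.release("web-2")          # web-2 finishes its request
next_server = balancer.acquire()   # so it is the least loaded again
print(first_three, next_server)
```

Compared with plain round-robin, this approach adapts when some requests take much longer than others.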

Table: Scalability Patterns and Use Cases

| Pattern | Function | Best Used For |
|---|---|---|
| Vertical Scaling | Adding resources to existing nodes | Databases requiring high single-node power |
| Horizontal Scaling | Adding more nodes to the pool | Web applications and microservices |
| Sharding | Splitting data across databases | High-volume transactional data |
| Caching | Storing copies of data for fast access | Read-heavy workloads |
| Asynchronous Processing | Queuing tasks for later execution | Background jobs and notifications |
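The asynchronous processing pattern in the table can be sketched with a queue and a background worker. This is a single-process illustration only: the job names and the `SENT:` prefix are made up, and a real deployment would normally use a durable message broker rather than an in-memory queue.

```python
import queue
import threading


def start_worker(tasks: queue.Queue, results: list) -> threading.Thread:
    """Start a background thread that drains `tasks` until it sees None."""

    def run() -> None:
        while True:
            job = tasks.get()
            try:
                if job is None:                 # sentinel: stop the worker
                    return
                results.append(f"SENT:{job}")   # stand-in for slow work
            finally:
                tasks.task_done()

    worker = threading.Thread(target=run, daemon=True)
    worker.start()
    return worker


tasks: queue.Queue = queue.Queue()
results: list = []
worker = start_worker(tasks, results)

for job in ("welcome-email", "invoice"):
    tasks.put(job)      # the caller returns immediately after enqueueing

tasks.put(None)         # ask the worker to stop once the queue is drained
tasks.join()            # wait here only so the demo can show the results
worker.join(timeout=1)
print(results)
```

The request path only pays the cost of enqueueing, so the worker pool can be scaled separately from the front end.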

Data Management in High-Growth Environments 💾

Data is often the biggest constraint in scaling. As transaction volumes rise, database performance can degrade rapidly. Planning for data scalability often means moving beyond a single, centralized relational database toward architectures that distribute reads, writes, and storage across multiple nodes.

Read Replicas: Creating copies of the primary database that serve read-only queries. This offloads the primary system and improves response times for users.
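A read replica setup needs some component that decides where each query goes. The sketch below assumes a very simple rule (statements starting with `SELECT` go to a replica, everything else to the primary); the `ReplicaRouter` class and the node names are hypothetical. Replicas can lag behind the primary, so reads that must see the latest write may still need to go to the primary.

```python
from itertools import cycle


class ReplicaRouter:
    """Sends read-only statements to replicas and all writes to the primary."""

    def __init__(self, primary: str, replicas: list[str]) -> None:
        self.primary = primary
        self._replicas = cycle(replicas) if replicas else None

    def route(self, sql: str) -> str:
        words = sql.split()
        verb = words[0].upper() if words else ""
        if verb == "SELECT" and self._replicas is not None:
            return next(self._replicas)   # rotate reads across replicas
        return self.primary               # writes and anything ambiguous


router = ReplicaRouter("db-primary", ["db-replica-1", "db-replica-2"])
targets = [
    router.route("SELECT * FROM orders WHERE id = 7"),
    router.route("select count(*) from orders"),
    router.route("UPDATE orders SET status = 'paid' WHERE id = 7"),
    router.route("SELECT 1"),
]
print(targets)
```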

Database Sharding: This involves splitting a large database into smaller, faster, and more easily managed pieces called shards. Each shard is a separate database instance. This allows the system to scale out by adding more shards rather than upgrading a single massive server.
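One way to decide which shard holds a record is to hash a shard key, as in the sketch below. The function name `shard_for` and the example customer IDs are assumptions for illustration. Note that with this plain modulo scheme, changing the shard count remaps most keys, which is why techniques such as consistent hashing are often used when shards are added frequently.

```python
import hashlib
from collections import Counter


def shard_for(key: str, shard_count: int) -> int:
    """Map a shard key to a shard index using a stable hash.

    hashlib is used instead of the built-in hash() because the latter is
    randomized per process for strings, which would break routing.
    """
    if shard_count < 1:
        raise ValueError("shard_count must be at least 1")
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % shard_count


# Hypothetical customer IDs spread over four shards.
keys = [f"customer-{i}" for i in range(1000)]
distribution = Counter(shard_for(k, 4) for k in keys)
print(sorted(distribution.items()))
```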

Event-Driven Architecture: Instead of systems polling each other for data, they react to events. This decouples components and allows each part of the system to scale independently based on its specific event load.
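The decoupling described above can be shown with a tiny in-process publish/subscribe bus. This is only a sketch: the `EventBus` class and the `order.placed` event name are hypothetical, and a distributed system would typically place a message broker between publishers and subscribers.

```python
from collections import defaultdict
from typing import Callable


class EventBus:
    """Minimal publish/subscribe hub: publishers never call consumers directly."""

    def __init__(self) -> None:
        self._handlers: dict[str, list[Callable[[dict], None]]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Callable[[dict], None]) -> None:
        self._handlers[event_type].append(handler)

    def publish(self, event_type: str, payload: dict) -> int:
        """Deliver the payload to every subscriber; return how many received it."""
        handlers = list(self._handlers.get(event_type, []))
        for handler in handlers:
            handler(payload)
        return len(handlers)


bus = EventBus()
billing_log: list[str] = []
shipping_log: list[str] = []

bus.subscribe("order.placed", lambda e: billing_log.append(f"invoice {e['id']}"))
bus.subscribe("order.placed", lambda e: shipping_log.append(f"ship {e['id']}"))

delivered = bus.publish("order.placed", {"id": 101})
ignored = bus.publish("order.cancelled", {"id": 101})  # no subscribers yet
print(delivered, ignored, billing_log, shipping_log)
```

The publisher does not know how many consumers exist, so new consumers can be added, or existing ones scaled, without changing it.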

When designing data storage, architects must also consider data retention policies. Archiving old data to cold storage keeps the active system lean and fast. This ensures that high-performance resources are dedicated to current operational needs.

Network and Connectivity Considerations 🌐

A scalable infrastructure relies on a robust network. As the number of connected devices and services grows, network complexity increases. The design must account for latency, throughput, and security.

Microsegmentation: Dividing the network into smaller zones to limit the spread of security threats. This also allows for granular traffic control, ensuring that critical services get priority.

Multi-Region Deployment: Placing infrastructure in multiple geographic locations reduces latency for users in different regions. It also provides disaster recovery capabilities. If one region goes offline, traffic can be routed to another.

API Gateways: These act as a single entry point for all client requests. They handle authentication, rate limiting, and routing. This protects backend services from being overwhelmed by direct traffic.
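Rate limiting at a gateway is often implemented with a token bucket, which permits short bursts while capping the sustained request rate. The sketch below is one possible implementation; the class name, parameters, and injectable clock are illustrative and not tied to any specific gateway product.

```python
import time
from typing import Callable


class TokenBucket:
    """Allows bursts up to `capacity` and a sustained `rate` of requests/second."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate
        self.capacity = capacity
        self._clock = clock                # injectable so tests can control time
        self._tokens = float(capacity)     # start with a full bucket
        self._last = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self._clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        elapsed = now - self._last
        self._tokens = min(self.capacity, self._tokens + elapsed * self.rate)
        self._last = now
        if self._tokens >= cost:
            self._tokens -= cost
            return True
        return False


# Simulated clock: time only advances when the list value is changed.
fake_now = [0.0]
bucket = TokenBucket(rate=1.0, capacity=2.0, clock=lambda: fake_now[0])

burst = [bucket.allow() for _ in range(3)]   # third request exceeds the burst
fake_now[0] = 1.0                            # one second later: one new token
after_wait = [bucket.allow(), bucket.allow()]
print(burst, after_wait)
```

A gateway would typically keep one bucket per client or API key and reject requests for which `allow()` returns `False`.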

Bandwidth Optimization: Compressing data transfers and minimizing payload sizes reduces the load on the network. Efficient protocols should be used to ensure maximum throughput with minimum overhead.
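The effect of payload minimization and compression is easy to measure. The sketch below uses made-up records (the field names and the `eu-west` value are placeholders) and compares a default JSON encoding, a compact encoding, and a gzip-compressed version of the compact one.

```python
import gzip
import json

# Hypothetical API response: 500 small, repetitive records.
records = [{"id": i, "status": "active", "region": "eu-west"} for i in range(500)]

raw = json.dumps(records).encode("utf-8")
# Dropping the optional whitespace after separators shrinks the payload.
compact = json.dumps(records, separators=(",", ":")).encode("utf-8")
# Repetitive structured data usually compresses well.
compressed = gzip.compress(compact)

print(len(raw), len(compact), len(compressed))

# The receiver restores the original data without loss.
restored = json.loads(gzip.decompress(compressed))
```

Compression trades CPU time for bandwidth, so it tends to help most on large, repetitive payloads and slow links.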

Governance and Lifecycle Management 🛡️

Without governance, scalability efforts can lead to chaos. Governance ensures that changes to the infrastructure are documented, reviewed, and approved. It maintains consistency across the organization.

- Change Control: Every modification to the infrastructure must be tracked. This prevents configuration drift and ensures that production environments match the design specifications.

- Cost Management: Scalability often increases costs. Governance ensures that resources are utilized efficiently and that spending aligns with budgetary constraints.

- Security Compliance: Security controls must scale with the infrastructure. As new nodes are added, they must automatically inherit security policies to prevent vulnerabilities.
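Configuration drift, mentioned under change control, can be detected by comparing the approved specification with what is actually running. The following sketch assumes both are available as flat dictionaries; the setting names and values are invented for illustration.

```python
MISSING = "<missing>"


def find_drift(desired: dict, actual: dict) -> dict:
    """Return {setting: (desired_value, actual_value)} for every mismatch."""
    drift = {}
    for key in sorted(desired.keys() | actual.keys()):
        want = desired.get(key, MISSING)
        have = actual.get(key, MISSING)
        if want != have:
            drift[key] = (want, have)
    return drift


# Hypothetical approved design versus the state found on a running node.
desired_config = {"instance_size": "medium", "tls": "1.3", "replicas": 3}
actual_config = {"instance_size": "large", "tls": "1.3", "debug_port": 9000}

drift_report = find_drift(desired_config, actual_config)
for setting, (want, have) in drift_report.items():
    print(f"{setting}: expected {want}, found {have}")
```

Running a check like this on a schedule turns drift into a reviewable report instead of a surprise during an incident.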

Lifecycle management involves the entire journey of a resource from creation to retirement. Automated tools should handle the provisioning and decommissioning of resources. This reduces human error and ensures that unused resources do not incur unnecessary costs.

Assessing Risks and Mitigation Strategies ⚠️

Scaling introduces new risks. The more complex the system, the higher the potential for failure points. A proactive approach to risk management is essential.

- Single Points of Failure: Identify any component that, if it fails, brings down the system. Design redundancy for all critical components.

- Capacity Planning: Regularly assess current usage against projected growth. Ensure that resources can be added before demand exceeds capacity.

- Disaster Recovery: Test backup and recovery procedures regularly. In a crisis, the ability to restore service quickly is vital.

- Vendor Lock-in: Relying on a single provider can limit flexibility. Use open standards where possible to preserve portability and negotiating leverage.

Regular stress testing and load testing help identify weaknesses before they become critical issues. By simulating peak loads, teams can verify that the infrastructure performs as expected under pressure.
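Capacity planning can start with a simple projection of when growth will reach a planning threshold. The sketch below assumes steady compound monthly growth and an 80% utilization threshold; both are simplifying assumptions, and the function name and figures are illustrative.

```python
import math
from typing import Optional


def months_until_threshold(current_load: float, capacity: float,
                           monthly_growth: float,
                           threshold: float = 0.8) -> Optional[int]:
    """Months until load reaches `threshold` of capacity under compound growth.

    Returns 0 if the threshold is already reached, and None if the load is
    not growing (or is zero) and would therefore never reach it.
    """
    limit = capacity * threshold
    if current_load >= limit:
        return 0
    if monthly_growth <= 0 or current_load <= 0:
        return None
    # Solve current_load * (1 + g)^n >= limit for the smallest whole n.
    return math.ceil(math.log(limit / current_load) / math.log(1 + monthly_growth))


# Hypothetical: 400 units of load today, 1,000 units of capacity, 10% growth.
print(months_until_threshold(400, 1_000, 0.10))
```

If the projected lead time is shorter than the time needed to procure and deploy new resources, the expansion should already be under way.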

Preparing for Future Expansion 🔮

The technology landscape changes rapidly. An architecture designed today must be adaptable to tomorrow’s requirements. This involves staying informed about emerging technologies and industry trends.

- Modularity: Design systems as modular components. This allows parts of the system to be upgraded or replaced without affecting the whole.

- Interoperability: Ensure that different systems can communicate using standard protocols. This facilitates integration with new tools and services.

- Scalable Security: Security measures must evolve alongside the infrastructure. New threats require new defenses, and the architecture must support these updates seamlessly.

- Continuous Improvement: Treat architecture as a living document. Regular reviews ensure that the design remains aligned with business goals and technical realities.

Investing in documentation and knowledge sharing ensures that the team understands the architecture. When personnel changes occur, the institutional knowledge remains, preserving the integrity of the system.

Final Considerations for Architects 🏁

Planning technology architecture for scalable infrastructure is a complex task that requires balancing competing demands. Performance, cost, security, and flexibility must all be considered. By leveraging structured methodologies and adhering to proven principles, organizations can build systems that stand the test of time.

The journey does not end with deployment. Continuous monitoring and optimization are required to maintain scalability. As business needs evolve, the architecture must evolve with them. This ensures that the technology remains an enabler of growth rather than a constraint.

Focus on the fundamentals: clean design, automation, and observability. These pillars support a resilient infrastructure capable of handling the challenges of the future. With careful planning and disciplined execution, scalable systems become a reality that drives business success.