SysML (Systems Modeling Language) is designed to handle the intricacies of complex engineering challenges. However, a common pitfall arises when models become bloated and difficult to navigate, and ultimately lose their value as communication tools. This phenomenon, often referred to as model bloat, can obscure critical design decisions and hinder verification efforts. The goal is not to reduce the fidelity of the model, but to align its complexity with the actual needs of the system lifecycle.

When behavioral models become over-engineered, they often suffer from excessive nesting, redundant states, and unclear data flows. This guide provides a structured approach to identifying and resolving these issues. By applying disciplined modeling practices, teams can ensure their SysML artifacts remain robust, maintainable, and clear.

🔍 Diagnosing the Symptoms of Model Over-Complexity

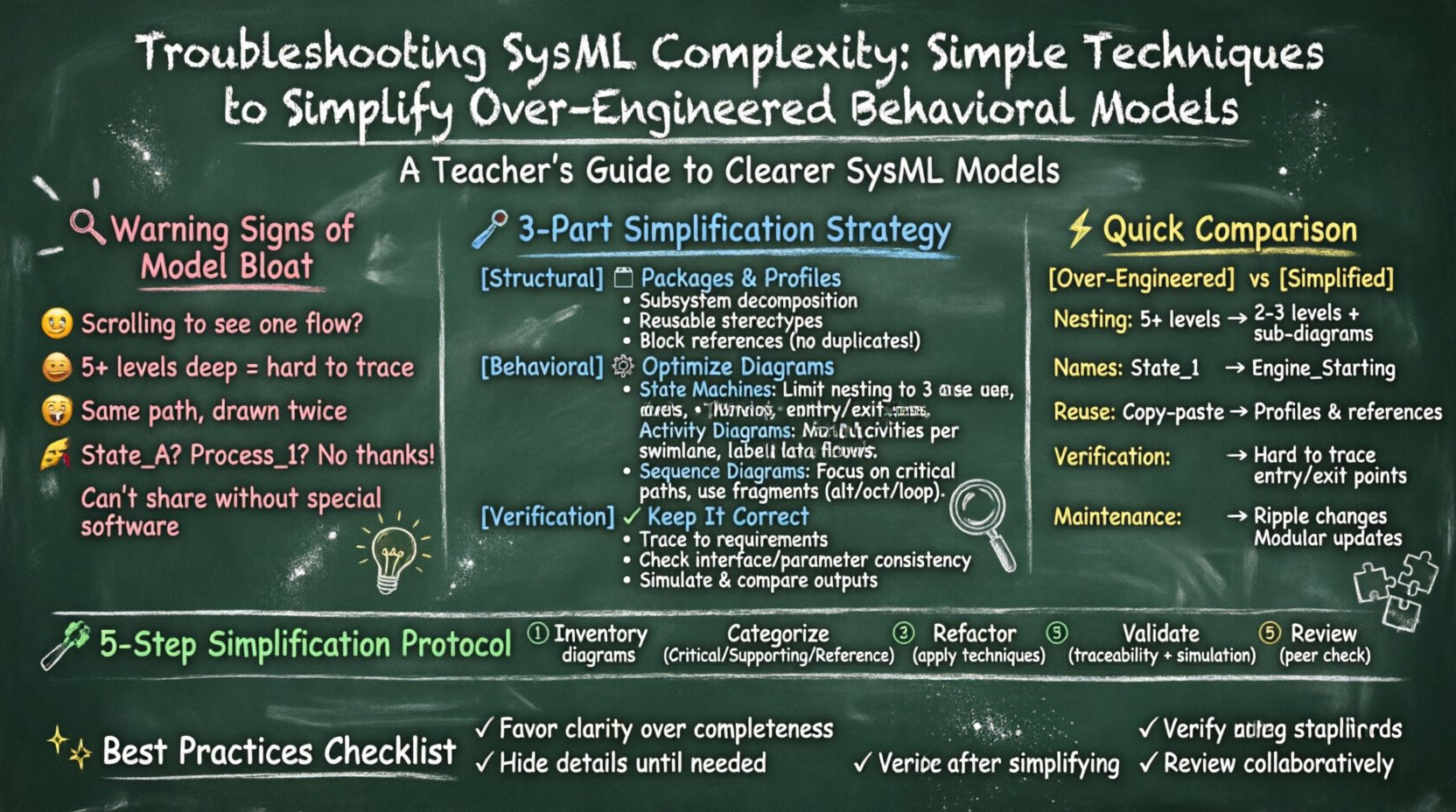

Before attempting to simplify, one must recognize the indicators that a model has drifted beyond a manageable scope. Complexity is not merely a function of size; it is a function of cognitive load. The following signs often point to behavioral models that require attention:

- Diagram Clutter: State machines or activity diagrams that require horizontal or vertical scrolling to view a single logical flow.

- Deep Nesting: States or activities buried five or more levels deep within compound structures, making entry and exit conditions difficult to trace.

- Redundant Logic: Identical transition paths repeated across multiple diagrams without modular reuse.

- Vague Naming Conventions: Labels such as “Process_1” or “State_A” that provide no semantic context.

- Tool Dependency: The model becomes unusable without specific software features, breaking portability across environments.

Addressing these symptoms requires a shift in mindset from “modeling everything” to “modeling what is necessary.” The following sections detail specific techniques to achieve this balance.
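Two of the symptoms above, deep nesting and vague names, lend themselves to a mechanical check. The following is a minimal sketch, assuming the state machine has been exported to a nested dictionary; that export format, the `nesting_depth` and `generic_names` helpers, and the regular expression are all illustrative assumptions, not part of SysML or any particular tool.

```python
import re

# Assumed export format: each state is a dict with a "name" and an
# optional list of nested "substates". This is a hypothetical structure.
GENERIC_NAME = re.compile(r"^(state|process|activity|action)_?(\d+|[A-Z])$", re.IGNORECASE)


def nesting_depth(state):
    """Return the depth of the deepest state, counting this state as level 1."""
    substates = state.get("substates", [])
    if not substates:
        return 1
    return 1 + max(nesting_depth(sub) for sub in substates)


def generic_names(state):
    """Collect every name in the tree that matches the generic pattern."""
    found = [state["name"]] if GENERIC_NAME.match(state["name"]) else []
    for sub in state.get("substates", []):
        found.extend(generic_names(sub))
    return found


machine = {
    "name": "Vehicle",
    "substates": [
        {"name": "State_A", "substates": [{"name": "Process_1"}]},
        {"name": "Engine_Starting"},
    ],
}

depth = nesting_depth(machine)    # 3 levels in this example
vague = generic_names(machine)    # ["State_A", "Process_1"]
```

A check like this only flags candidates for review; whether a given depth or name is actually a problem remains a judgment call for the team.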

🧱 Structural Strategies for Simplification

Behavioral models do not exist in isolation. They rely on structural definitions to function correctly. Often, behavioral complexity stems from structural ambiguity. The first step in troubleshooting is to review the underlying structural support.

1. Leveraging Packages and Profiles

Organizing model elements into logical packages is fundamental. When behavioral diagrams become too large, consider breaking them down by system hierarchy or subsystem.

- Subsystem Decomposition: Instead of one massive state machine for an entire vehicle system, create individual state machines for the propulsion system, navigation system, and user interface. Connect them via well-defined interfaces.

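- Custom Profiles and Libraries: Define reusable stereotypes to mark common kinds of behavior, and keep the shared behavior itself in one place. If multiple subsystems share a “Safety Monitor” behavior, define it once (for example, as a reusable state machine or activity in a shared package), tag it with a stereotype from the profile, and reference it where needed instead of redrawing it.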

- Reference Models: Use block references to link behavior to structure without duplicating the definition. This keeps the behavioral view clean while maintaining structural integrity.
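The subsystem decomposition idea above can be sketched in a few lines. This is an illustrative toy, not a SysML execution engine: the `StateMachine` class, the `run` dispatcher, and the event names are all assumptions made for the example. Each subsystem owns a small transition table, and the only coupling between them is the set of events they exchange.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class StateMachine:
    name: str
    state: str
    # Maps (current_state, event) to (next_state, emitted_event_or_None).
    transitions: dict

    def handle(self, event):
        """Apply one event; return the event emitted in response, if any."""
        key = (self.state, event)
        if key not in self.transitions:
            return None  # This subsystem ignores the event.
        self.state, emitted = self.transitions[key]
        return emitted


def run(machines, first_event):
    """Deliver events to every machine until no new events are emitted."""
    queue = deque([first_event])
    while queue:
        event = queue.popleft()
        for machine in machines:
            emitted = machine.handle(event)
            if emitted is not None:
                queue.append(emitted)


propulsion = StateMachine(
    "Propulsion", "Off",
    {("Off", "start_requested"): ("Running", "propulsion_ready")},
)
navigation = StateMachine(
    "Navigation", "Idle",
    {("Idle", "propulsion_ready"): ("Guiding", None)},
)

run([propulsion, navigation], "start_requested")
# propulsion.state is now "Running" and navigation.state is "Guiding".
```

Because the two tables share nothing except the `propulsion_ready` event, either subsystem can be reworked without touching the other, which is the point of decomposing along well-defined interfaces.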

2. Abstraction and Refinement Levels

Not every detail needs to be visible in every view. Adopt a multi-level abstraction strategy.

- High-Level Views: These focus on major milestones and external interactions. They omit internal state transitions.

- Mid-Level Views: These detail the logic of specific subsystems.

- Low-Level Views: These contain the atomic logic required for implementation verification.

By segregating these views, stakeholders can review the model at the appropriate depth without being overwhelmed by irrelevant details.
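One way to picture this segregation is to tag each element with the most abstract level at which it should appear and filter per view. The sketch below assumes such tags exist; the `Level` enum, the element names, and the `view` function are hypothetical.

```python
from enum import IntEnum


class Level(IntEnum):
    HIGH = 1  # major milestones and external interactions
    MID = 2   # subsystem logic
    LOW = 3   # atomic logic for implementation verification


# Hypothetical elements, each tagged with the level where it first appears.
elements = [
    ("Mission_Start", Level.HIGH),
    ("Propulsion_Warmup", Level.MID),
    ("Fuel_Valve_Open", Level.LOW),
]


def view(elements, max_level):
    """Return the element names visible at the requested level of detail."""
    return [name for name, level in elements if level <= max_level]


high_level_view = view(elements, Level.HIGH)  # ["Mission_Start"]
mid_level_view = view(elements, Level.MID)    # adds "Propulsion_Warmup"
```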

⚙️ Behavioral Model Optimization Techniques

Once the structure is sound, focus on the behavior itself. For detailed behavior, SysML offers three main diagram types: State Machine Diagrams, Activity Diagrams, and Sequence Diagrams. Each has unique pitfalls that lead to complexity.

3. Streamlining State Machine Diagrams

State machines are the most common source of behavioral complexity. They can easily spiral into spaghetti-like structures if not managed.

- Minimize Compound States: While compound states are useful, excessive nesting makes transition logic hard to verify. Limit nesting depth to three or four levels.

- Use Entry and Exit Actions: Avoid placing logic on every transition. Use entry actions to initialize a state and exit actions to clean up, reducing the number of edges on the diagram.

- Consolidate Final States: Avoid having multiple final states scattered across a diagram. Where possible, funnel behavior into a single terminal state or a well-defined set of termination points.

- Guard Condition Discipline: Keep guard conditions simple. If a guard condition is a complex boolean expression, consider moving the logic to a separate activity or using a parameter.
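The entry/exit and guard guidelines above can be illustrated with a small executable sketch. It is a simplified stand-in for state machine semantics, and the `State` and `Machine` classes, the `ready_to_start` guard, and the context keys are assumptions made for the example. Setup and cleanup live on the states, and the compound boolean condition is given a name instead of being written on the transition.

```python
class State:
    def __init__(self, name, on_entry=None, on_exit=None):
        self.name = name
        self.on_entry = on_entry or (lambda ctx: None)
        self.on_exit = on_exit or (lambda ctx: None)


class Machine:
    def __init__(self, initial, ctx):
        self.ctx = ctx
        self.current = initial
        self.transitions = []  # (source, event, guard, target)
        initial.on_entry(ctx)

    def add(self, source, event, target, guard=lambda ctx: True):
        self.transitions.append((source, event, guard, target))

    def fire(self, event):
        """Take the first enabled transition; run exit, then entry actions."""
        for source, ev, guard, target in self.transitions:
            if source is self.current and ev == event and guard(self.ctx):
                self.current.on_exit(self.ctx)
                self.current = target
                target.on_entry(self.ctx)
                return True
        return False


def ready_to_start(ctx):
    """Named guard: keeps the compound condition off the diagram edge."""
    return ctx["fuel"] > 10 and ctx["battery_ok"]


ctx = {"log": [], "fuel": 40, "battery_ok": True}
idle = State("Idle", on_exit=lambda c: c["log"].append("leave Idle"))
starting = State("Engine_Starting", on_entry=lambda c: c["log"].append("prime fuel pump"))

machine = Machine(idle, ctx)
machine.add(idle, "start", starting, guard=ready_to_start)
machine.fire("start")
# ctx["log"] is now ["leave Idle", "prime fuel pump"].
```

On a diagram, the equivalent transition would carry only the trigger and a short guard such as `[ready_to_start]`, with the detail documented once where the guard is defined.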

4. Refining Activity Diagrams

Activity diagrams represent workflows and data flows. They often become cluttered with excessive swimlanes or object nodes.

- Swimlane Management: Limit swimlanes to distinct roles or subsystems. If a swimlane contains more than 10 activities, consider splitting the diagram or creating a sub-activity.

- Data Flow Clarity: Ensure that object flows are explicitly labeled. Avoid “invisible” data passing where the source and destination are not obvious.

- Parallelism: Use fork and join nodes only when true parallelism exists. If logic is sequential, do not use parallel constructs. This reduces cognitive load when tracing execution paths.
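The swimlane and data flow guidelines above can be checked mechanically once an activity diagram is available as data. The following sketch assumes a hypothetical export in which actions are `(name, swimlane)` pairs and object flows are `(source, target, label)` triples; the function names are illustrative.

```python
from collections import Counter

MAX_ACTIONS_PER_SWIMLANE = 10  # threshold taken from the guideline above


def overloaded_swimlanes(actions, limit=MAX_ACTIONS_PER_SWIMLANE):
    """Return swimlanes that hold more actions than the limit."""
    counts = Counter(lane for _name, lane in actions)
    return sorted(lane for lane, count in counts.items() if count > limit)


def unlabeled_flows(flows):
    """Return (source, target) pairs for object flows with an empty label."""
    return [(src, dst) for src, dst, label in flows if not label.strip()]


# Hypothetical diagram content.
actions = [(f"Nav_Step_{i}", "Navigation") for i in range(12)] + [("Spin_Up", "Propulsion")]
flows = [
    ("Read_GPS", "Compute_Route", "position"),
    ("Compute_Route", "Spin_Up", ""),
]

crowded = overloaded_swimlanes(actions)  # ["Navigation"]
unclear = unlabeled_flows(flows)         # [("Compute_Route", "Spin_Up")]
```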

5. Sequence Diagram Readability

Sequence diagrams can become unwieldy when representing complex interactions over long timeframes.

- Focus on Critical Paths: Do not attempt to model every possible interaction. Focus on the primary use case and the critical error handling paths.

- Fragment Usage: Use combined fragments (alt, opt, loop) to represent variations without duplicating lifelines. This keeps the diagram compact.

- Abstraction of Lifelines: Group related participants under a composite lifeline if they function as a single logical unit.

📊 Comparison of Modeling Approaches

Understanding the difference between an over-engineered approach and a simplified approach is crucial. The table below outlines the contrast between common practices.

| Feature | Over-Engineered Approach | Simplified Approach |
|---|---|---|
| State Nesting | Deep nesting (5+ levels) for every detail | Shallow nesting (2-3 levels) with separate diagrams for sub-logic |
| Naming | Generic names (e.g., “State_1”) | Semantic names (e.g., “Engine_Starting”) |
| Reuse | Duplicate logic across diagrams | Use of references and profiles |
| Verification | Difficult to trace paths manually | Clear entry/exit points and labeled transitions |
| Maintenance | High cost to update; ripple effects | Modular updates; localized changes |

🔎 Verification and Validation of Simplified Models

Simplification should not compromise correctness. Once changes are made, the model must still satisfy the system requirements. Verification confirms that the simplified model behaves the same as the complex version for every behavior the requirements depend on.

1. Requirement Traceability

Every state, transition, or activity should be traceable to a specific system requirement. If a simplification removes a detail, verify that the requirement is still met by another part of the model. Use requirement links to maintain this connection.
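A traceability check of this kind can be sketched as two set operations. The example assumes requirement links can be exported as a mapping from element name to requirement IDs; the IDs, element names, and helper functions below are hypothetical.

```python
def untraced_elements(links):
    """Return elements that have no requirement link at all."""
    return sorted(name for name, reqs in links.items() if not reqs)


def uncovered_requirements(requirements, links):
    """Return requirements that no remaining element traces to."""
    covered = set().union(*links.values())
    return sorted(set(requirements) - covered)


requirements = ["REQ-001", "REQ-002", "REQ-003"]  # hypothetical IDs

# Links after a simplification that removed the "Fuel_Check" state.
links_after = {
    "Engine_Starting": {"REQ-001"},
    "Idle": {"REQ-003"},
    "Standby": set(),
}

lost = uncovered_requirements(requirements, links_after)  # ["REQ-002"]
orphans = untraced_elements(links_after)                  # ["Standby"]
```

In this example the removal of `Fuel_Check` left `REQ-002` without any supporting element, which is exactly the situation the paragraph above warns about.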

2. Consistency Checks

Perform consistency checks across the model.

- Interface Consistency: Ensure that inputs and outputs match between parent and child behaviors.

- Parameter Consistency: Verify that data types remain consistent across transitions.

- State Coverage: Ensure that all possible states can be reached and that no deadlocks are introduced during simplification.
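The state coverage check above amounts to a graph search. The sketch below is a simplification under stated assumptions: guards and events are ignored, the transition table is a hypothetical export mapping each state to its possible successors, and a "deadlock" is approximated as a reachable non-final state with no outgoing transitions.

```python
from collections import deque


def reachable_states(transitions, initial):
    """Breadth-first search over the transition graph from the initial state."""
    seen = {initial}
    queue = deque([initial])
    while queue:
        state = queue.popleft()
        for target in transitions.get(state, []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return seen


def check_coverage(states, transitions, initial, final_states):
    """Return (unreachable states, reachable dead ends that are not final)."""
    reached = reachable_states(transitions, initial)
    unreachable = sorted(set(states) - reached)
    dead_ends = sorted(
        s for s in reached if not transitions.get(s) and s not in final_states
    )
    return unreachable, dead_ends


states = ["Idle", "Engine_Starting", "Running", "Fault", "Shutdown", "Diagnostics"]
transitions = {
    "Idle": ["Engine_Starting"],
    "Engine_Starting": ["Running", "Fault"],
    "Running": ["Shutdown"],
    "Diagnostics": ["Idle"],
}

unreachable, dead_ends = check_coverage(states, transitions, "Idle", {"Shutdown"})
# unreachable == ["Diagnostics"], dead_ends == ["Fault"]
```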

3. Simulation and Analysis

If the environment supports simulation, run the simplified model against the same test cases used for the complex model and compare the outputs. Matching outputs are objective evidence that the simplification preserves behavior for the scenarios those test cases cover; scenarios outside the test suite remain unverified, so the strength of this evidence depends on test coverage.
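Where no simulation environment is available, the same comparison can be approximated on an exported transition table. The sketch below is an assumption-laden toy: each model is a `(transitions, initial_state)` pair, transitions map `(state, event)` to `(next_state, output)`, and only the observable outputs are compared, since internal state names may legitimately differ after simplification.

```python
def run_model(transitions, initial, events):
    """Replay events and return the list of outputs (None when nothing is emitted)."""
    state, outputs = initial, []
    for event in events:
        state, output = transitions.get((state, event), (state, None))
        outputs.append(output)
    return outputs


def compare_models(original, simplified, test_cases):
    """Return the test cases whose outputs differ between the two models."""
    return [
        events for events in test_cases
        if run_model(*original, events) != run_model(*simplified, events)
    ]


# Original model with a redundant "Standby" state that behaves like "Idle".
original = ({
    ("Idle", "start"): ("Starting", None),
    ("Standby", "start"): ("Starting", None),
    ("Starting", "ok"): ("Running", "engine_on"),
    ("Running", "stop"): ("Standby", "engine_off"),
}, "Idle")

# Simplified model: "Standby" merged into "Idle".
simplified = ({
    ("Idle", "start"): ("Starting", None),
    ("Starting", "ok"): ("Running", "engine_on"),
    ("Running", "stop"): ("Idle", "engine_off"),
}, "Idle")

test_cases = [
    ["start", "ok", "stop"],
    ["start", "ok", "stop", "start", "ok"],
]

mismatches = compare_models(original, simplified, test_cases)  # empty list here
```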

🤝 Collaboration and Review Processes

Complexity often creeps in when individual contributors model in isolation. Establishing a collaborative review process helps catch complexity early.

1. Modeling Standards

Define a set of rules that the team must follow. These rules act as a guardrail against complexity.

- Maximum Depth and Size: Set a rule that no behavior may exceed a certain nesting depth and that no diagram may exceed a certain number of nodes.

- Naming Standards: Mandate specific naming conventions for states, transitions, and activities.

- Diagram Limits: Limit the number of diagrams per subsystem to prevent sprawl.
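Rules like these are easier to enforce when they are written down as data. The following sketch assumes a hypothetical diagram export (dictionaries with `name`, `subsystem`, and `nodes`); the `ModelingStandard` class, its default limits, and the naming pattern are placeholder values that a team would replace with its own.

```python
import re
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelingStandard:
    # All defaults are illustrative placeholders, not recommended values.
    max_nodes_per_diagram: int = 30
    max_diagrams_per_subsystem: int = 8
    name_pattern: str = r"^[A-Z][a-z0-9]*(_[A-Z][a-z0-9]*)*$"


def check(diagrams, std=ModelingStandard()):
    """Return a list of human-readable violations of the standard."""
    violations = []
    for d in diagrams:
        if len(d["nodes"]) > std.max_nodes_per_diagram:
            violations.append(f"{d['name']}: {len(d['nodes'])} nodes exceeds the limit")
        for node in d["nodes"]:
            if not re.match(std.name_pattern, node):
                violations.append(f"{d['name']}: node '{node}' breaks the naming standard")
    per_subsystem = Counter(d["subsystem"] for d in diagrams)
    for subsystem, count in per_subsystem.items():
        if count > std.max_diagrams_per_subsystem:
            violations.append(f"{subsystem}: {count} diagrams exceeds the limit")
    return violations


diagrams = [
    {"name": "Propulsion_Startup", "subsystem": "Propulsion",
     "nodes": ["Idle", "Engine_Starting", "Running", "state1"]},
    {"name": "Propulsion_Shutdown", "subsystem": "Propulsion",
     "nodes": ["Running", "Idle"]},
]

strict = ModelingStandard(max_nodes_per_diagram=3, max_diagrams_per_subsystem=1)
violations = check(diagrams, strict)  # three violations in this example
```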

2. Regular Model Reviews

Schedule regular reviews where the sole purpose is to assess complexity, not functionality. Ask questions like:

- Can this diagram be understood by a new engineer within 15 minutes?

- Are there any redundant paths that can be merged?

- Is the abstraction level appropriate for the current stakeholder?

3. Documentation of Decisions

When simplifying, document the rationale. If a detail was removed, explain why it is safe to remove. This prevents future confusion and ensures that knowledge is retained even if the model changes over time.

🛠️ Step-by-Step Simplification Protocol

For teams ready to tackle their models, follow this structured protocol.

- Inventory: List all behavioral diagrams and their sizes.

- Categorize: Mark diagrams as “Critical,” “Supporting,” or “Reference.”

  - Critical diagrams require high fidelity.

  - Supporting diagrams can be abstracted.

  - Reference diagrams serve as a lookup.

- Refactor: Apply the techniques discussed above (nesting reduction, naming standardization, profile usage).

- Validate: Run consistency checks and requirement traceability analysis.

- Document: Record the changes and the reasoning behind them.

- Review: Have a peer review the simplified model.
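The inventory and categorize steps of the protocol above can be kept in a simple table and queried for refactoring candidates. The sketch below assumes a hand-maintained inventory; the diagram names, node counts, and the `abstraction_candidates` helper are hypothetical.

```python
def abstraction_candidates(inventory, min_nodes):
    """Return Supporting diagrams at or above min_nodes, largest first."""
    picked = [
        d for d in inventory
        if d["category"] == "Supporting" and d["nodes"] >= min_nodes
    ]
    picked.sort(key=lambda d: d["nodes"], reverse=True)
    return [d["name"] for d in picked]


inventory = [
    {"name": "Propulsion_Startup", "nodes": 42, "category": "Critical"},
    {"name": "Cabin_Lighting", "nodes": 58, "category": "Supporting"},
    {"name": "Diagnostics_Menu", "nodes": 35, "category": "Supporting"},
    {"name": "Legacy_Bus_Map", "nodes": 90, "category": "Reference"},
]

candidates = abstraction_candidates(inventory, min_nodes=30)
# ["Cabin_Lighting", "Diagnostics_Menu"]
```

Critical diagrams are deliberately excluded here because the protocol keeps them at high fidelity; they would be refactored for readability rather than abstracted.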

🚀 Long-Term Maintenance Strategies

Simplification is not a one-time event. Models evolve as requirements change. To maintain simplicity over time:

- Iterative Refinement: Do not attempt to model the entire system at once. Build iteratively, refining as you go.

- Automated Checks: Where possible, use automated validation scripts to flag diagrams that exceed size limits or violate naming conventions.

- Training: Ensure all modelers understand the principles of abstraction and simplification. Consistency in skill level reduces variability in model quality.

- Version Control: Treat model files like code. Use version control to track changes. This allows you to revert if a simplification introduces errors.

📝 Summary of Best Practices

Avoiding the trap of over-engineering requires discipline and a clear strategy. By focusing on structure, abstraction, and verification, teams can create behavioral models that are both powerful and manageable.

- Keep it Simple: Favor clarity over completeness in early stages.

- Use Abstraction: Hide details until they are needed.

- Standardize: Enforce naming and structural conventions.

- Verify: Ensure simplification does not break functionality.

- Collaborate: Use reviews to catch complexity before it spreads.

By adhering to these principles, organizations can ensure that their SysML models remain valuable assets throughout the product lifecycle, rather than becoming burdensome artifacts that hinder progress.