Building a robust database infrastructure requires precision at every stage of development. The Entity Relationship Diagram (ERD) serves as the blueprint for this structure. It defines how data entities interact, how information flows, and how integrity is maintained throughout the system lifecycle. Skipping a thorough review of the ERD can lead to costly refactoring, data corruption, and performance bottlenecks down the line. This guide provides a detailed, actionable checklist to validate your schema before committing to implementation.

Database architects and developers must approach schema design with a critical eye. The cost of correcting a structural error in production is significantly higher than the effort required to fix it during the design phase. By following a structured review process, teams ensure that the resulting database supports business logic, adheres to normalization principles, and remains scalable.

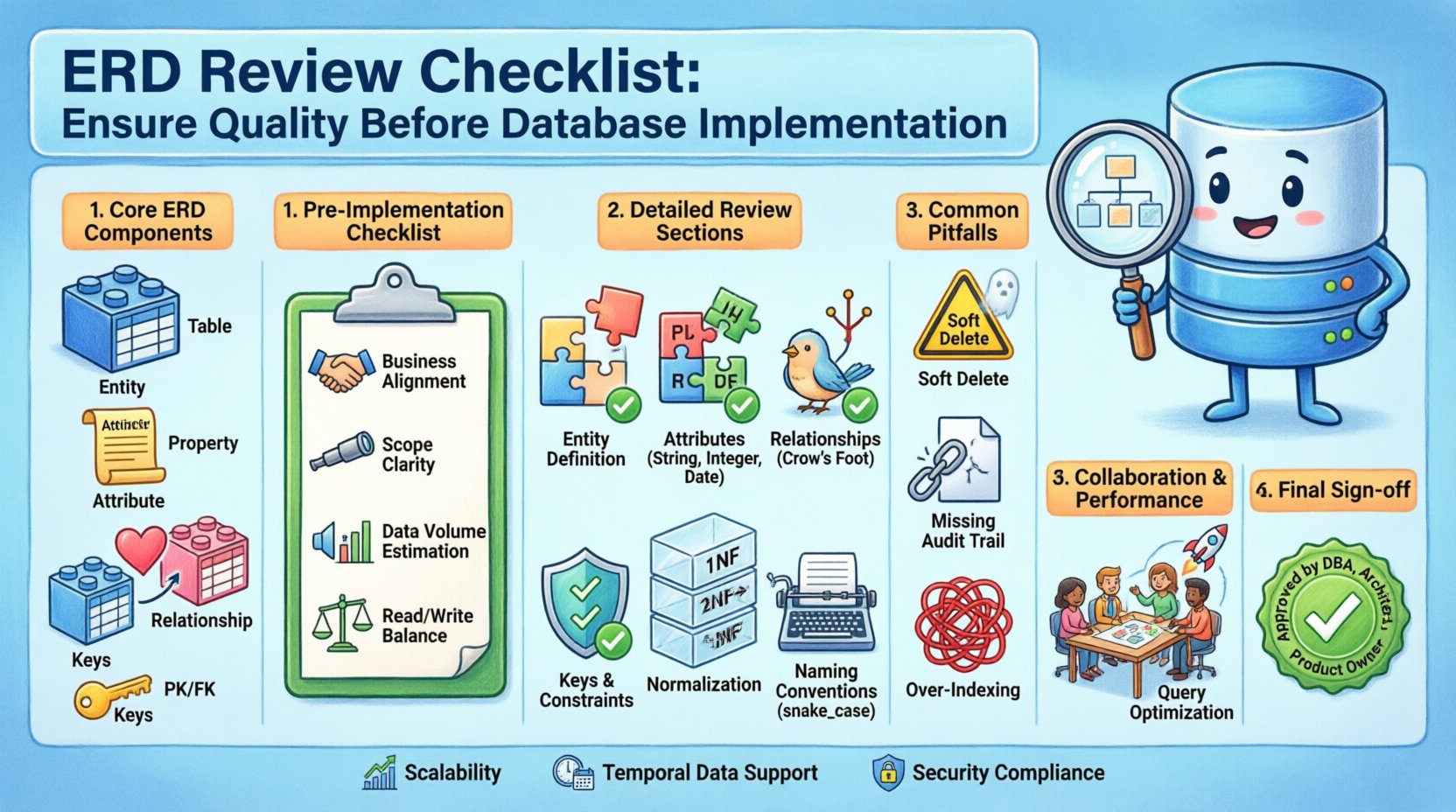

Understanding the Core Components of an ERD 🔍

Before diving into the checklist, it is essential to understand the fundamental building blocks that make up a standard Entity Relationship Diagram. These components form the vocabulary of your data model.

- Entities: These represent the real-world objects or concepts about which data is stored. In a relational context, entities typically map to tables.

- Attributes: These describe the properties or characteristics of an entity. They map to columns within a table.

- Relationships: These define the associations between entities. They indicate how data in one table connects to data in another.

- Cards and Keys: Cardinality defines the numerical relationship between entities (e.g., one-to-one, many-to-many). Keys ensure unique identification and connectivity.

A high-quality ERD must clearly articulate these elements. Ambiguity in the diagram translates directly to ambiguity in the code, leading to implementation errors.

Pre-Implementation Validation Steps ✅

Before applying any specific checklist items, the overall context of the database must be aligned with business requirements. This phase ensures the model is fit for purpose.

- Business Requirement Alignment: Verify that every entity and relationship maps to a specific business rule or user story.

- Scope Definition: Confirm the boundaries of the data. Are we designing for a single application, a microservice, or an enterprise-wide warehouse?

- Data Volume Estimation: Consider the expected volume of records. This influences decisions on indexing and partitioning strategies.

- Read/Write Ratio: Understand the workload profile. A read-heavy application may require denormalization, whereas a write-heavy system prioritizes strict integrity.

Detailed ERD Review Checklist 📝

This section breaks down the specific technical attributes that require scrutiny. Use this list as a verification tool during your design review sessions.

1. Entity and Table Definition

Every entity in the diagram must be distinct and well-defined. A common error is creating overlapping entities that should be merged, or splitting a single concept across multiple tables unnecessarily.

- Distinctness: Ensure each table represents a unique concept. Avoid tables that store similar data for different purposes without a clear distinction.

- Granularity: Check if tables are too granular. Excessive splitting can lead to complex joins and performance degradation.

- Naming Conventions: Verify consistency. Tables should use singular names (e.g.,

Customerinstead ofCustomers) to align with object-oriented mapping patterns. - Metadata: Ensure creation and modification timestamps are included in every table to support auditing and data lineage tracking.

2. Attributes and Data Types

Attributes define the nature of the data stored. Selecting the correct data type is critical for storage efficiency and query performance.

- Primary Data Types: Ensure integers, strings, and booleans are used correctly. Avoid using strings for dates or numbers.

- Length Constraints: Define maximum lengths for string fields. This prevents storage bloat and ensures consistency during input validation.

- Nullability: Explicitly define whether a field can be null. Most fields should not be nullable unless the business logic permits it.

- Default Values: Check if default values are necessary. For example, a status field might default to ‘active’ rather than requiring an initial insert.

- Enum Values: Where appropriate, use enumerated lists to restrict values. This prevents invalid data entry at the source.

3. Relationships and Cardinality

Relationships are the glue that holds the data model together. Errors here lead to orphaned records or data duplication.

| Relationship Type | Description | Implementation Note |

|---|---|---|

| One-to-One (1:1) | One record in Table A links to exactly one record in Table B. | Usually implemented by placing the Primary Key of A as a Foreign Key in B. |

| One-to-Many (1:N) | One record in Table A links to many records in Table B. | Place the Primary Key of A as a Foreign Key in B. |

| Many-to-Many (M:N) | Records in A can link to many in B, and vice versa. | Requires a junction table linking the two Primary Keys. |

- Cardinality Verification: Review the crow’s foot notation or equivalent to ensure the direction of the relationship is correct.

- Optionality: Distinguish between mandatory and optional relationships. A foreign key constraint should reflect whether a related record must exist.

- Recursive Relationships: Check for self-referencing tables (e.g., an

Employeetable linking to aManagerID within the same table). - Circular Dependencies: Ensure relationships do not create circular loops that complicate data loading or querying.

4. Keys and Constraints

Keys are the mechanism for uniqueness and connection. Without proper keys, data integrity collapses.

- Primary Keys: Every table must have a Primary Key. It should be unique and never null.

- Surrogate Keys: Consider using system-generated IDs (surrogate keys) instead of natural business keys. This avoids changes in business logic affecting the database structure.

- Foreign Keys: Verify that all foreign keys reference valid primary keys in parent tables.

- Unique Constraints: Identify fields that must be unique (e.g., email addresses, account numbers) but are not the primary key.

- Check Constraints: Look for logical rules that cannot be enforced by data types alone (e.g.,

start_datemust be beforeend_date).

5. Normalization

Normalization reduces redundancy and improves data integrity. While over-normalization can hurt performance, under-normalization creates anomalies.

- First Normal Form (1NF): Ensure atomic values. No repeating groups or arrays within a single cell.

- Second Normal Form (2NF): Ensure all non-key attributes are fully dependent on the primary key, not just part of it.

- Third Normal Form (3NF): Ensure no transitive dependencies. Non-key attributes should depend only on the primary key, not on other non-key attributes.

- Denormalization Strategy: If performance is a concern, document where and why denormalization is applied. This should be a conscious decision, not an oversight.

6. Naming Conventions

Consistent naming reduces cognitive load for developers and reduces the likelihood of errors.

- Table Names: Use singular nouns (e.g.,

Order, notOrders). - Column Names: Use snake_case for consistency (e.g.,

created_at). - Avoid Reserved Words: Ensure no column names conflict with SQL keywords (e.g.,

user,order,group). - Clarity: Names should be descriptive. Avoid abbreviations unless they are industry-standard.

Common Pitfalls to Avoid ⚠️

Even experienced designers can overlook critical details. Being aware of common traps helps in maintaining a clean schema.

- Ignoring Soft Deletes: Decide if data needs to be permanently deleted or logically marked as inactive. A

is_deletedflag is often safer than physical removal. - Missing Audit Trails: Ensure there is a mechanism to track who changed data and when. This is crucial for compliance.

- Over-Indexing: Adding too many indexes slows down write operations. Review the query patterns to justify index placement.

- Hardcoded Values: Avoid storing specific values like country codes as strings if they can be mapped to a reference table.

- Implicit Assumptions: Do not assume a field is optional if the business logic requires it. Document assumptions clearly.

Collaboration and Documentation 🤝

An ERD is not just a technical artifact; it is a communication tool. It must be understood by stakeholders, not just database administrators.

- Stakeholder Review: Have business analysts review the diagram to confirm it matches their mental model of the process.

- Version Control: Treat the ERD as code. Store it in version control to track changes over time.

- Documentation: Include a data dictionary alongside the diagram. Define what each field means and its allowed range.

- Change Management: Establish a process for modifying the schema. Changes should be reviewed and approved, not applied ad-hoc.

Performance Considerations 🚀

While the ERD is logical, it must support physical performance goals. Certain design choices have direct performance implications.

- Join Complexity: Minimize the number of joins required for common queries. Complex joins can strain the query optimizer.

- Partitioning Readiness: Design tables with partitioning in mind if the dataset is expected to grow massive.

- Searchability: Ensure fields that are frequently searched are indexed. Consider full-text search requirements for text-heavy fields.

- Concurrency: Evaluate locking strategies. High-concurrency environments may require specific isolation levels or table designs.

Final Sign-off Criteria 🏁

Before proceeding to implementation, the ERD must meet specific acceptance criteria. This ensures a smooth transition from design to development.

- Completeness: All entities and relationships required by the scope are present.

- Consistency: Naming conventions and data types are applied uniformly.

- Integrity: Primary and foreign key constraints are correctly defined.

- Clarity: The diagram is readable and understandable by the engineering team.

- Approval: Key stakeholders have signed off on the design.

Adhering to this checklist ensures that the database foundation is solid. It reduces technical debt and facilitates smoother development cycles. A well-reviewed ERD is the first step toward a resilient data architecture.

Reviewing the ERD for Future Scalability

Designing for the present is insufficient. The data model must accommodate growth without requiring a complete rebuild.

- Horizontal Scaling: Consider how sharding might affect relationships. Foreign keys across shards are complex and often avoided.

- Vertical Scaling: Ensure data types can handle larger values. For instance, using

BIGINTinstead ofINTfor counters. - Feature Flags: Design tables to support soft feature flags. This allows new functionality to be toggled without schema changes.

- Backward Compatibility: Plan for schema migrations. Adding columns should not break existing queries.

Handling Special Cases like Temporal Data

Time is a critical dimension in data modeling. Handling history correctly is often overlooked.

- Effective Dates: For entities that change over time, include start and end dates to track history.

- Time Zones: Store timestamps in UTC to avoid ambiguity across regions.

- Snapshots: Decide if historical snapshots are required. This might necessitate a separate history table.

- Temporal Tables: Some systems support native temporal tables. Evaluate if this fits the architectural constraints.

Security and Compliance in the Schema

Data security begins at the table level. The structure must support privacy and protection requirements.

- PII Handling: Identify Personally Identifiable Information fields. These require encryption or masking.

- Access Control: Design roles and permissions based on the data sensitivity defined in the schema.

- Encryption at Rest: Ensure the database engine supports encryption for sensitive fields.

- Retention Policies: Define fields that indicate when data can be deleted according to legal requirements.

By rigorously applying these checks, the database becomes a reliable asset rather than a liability. The effort invested in the ERD review phase pays dividends in maintainability and performance.